The Myth of the Fancy Typewriter

You have probably seen it a million times. You start typing a text message to a friend, and your phone suggests the next word. If you type "I am on my", it might suggest "way." This is basic auto-complete. For a long time, many people thought Large Language Models (LLMs) like ChatGPT or Claude were just bigger, more expensive versions of that. Critics called them "stochastic parrots," implying that they repeat patterns without understanding anything. But if you have ever asked an AI to explain a messy piece of Python code, or why a specific physics formula works the way it does, you know that is not the whole story. Technically, LLMs still work by predicting the next word. They do it with so much context, though, that the result goes far beyond simple auto-complete. We are entering an era where AI can act as a logical translator.

For anyone between the ages of 10 and 20, this can feel like a superpower. Maybe you are trying to beat a difficult level in a game by understanding its mechanics. Maybe you are trying to survive a high school computer science class. Either way, LLMs can help you break down walls of complex logic. Let us dive into how these models actually work under the hood, and why they are much more than a fancy typewriter.

Why Auto-Complete Is a Bad Comparison

To understand why LLMs are special, we first have to look at what they are not. Phone keyboards have traditionally used something called an N-gram model. It looks at the last one or two words and guesses the most likely next word, based on a large database of common phrases. It does not "think" about the context of your conversation. It just knows that "Good" is often followed by "morning."
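To make the idea concrete, here is a toy bigram model sketched in Python. A bigram model is an N-gram model that looks at just one previous word. The tiny phrase list is made up for illustration, and `train_bigrams` and `suggest_next` are hypothetical names. Real keyboards use far more data and extra tricks, but the core N-gram idea is the same: count which word usually comes next.

```python
from collections import Counter, defaultdict

def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        # Pair every word with the word right after it.
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows

def suggest_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# A tiny, made-up "database" of phrases (real keyboards use far more data).
corpus = [
    "good morning everyone",
    "good morning to you",
    "good night",
    "i am on my way",
    "on my way home",
]
model = train_bigrams(corpus)
print(suggest_next(model, "good"))  # morning
print(suggest_next(model, "my"))    # way
```

Notice that `suggest_next` only ever sees one word. Ask it about "my" and it answers "way," no matter what the rest of your sentence was about.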

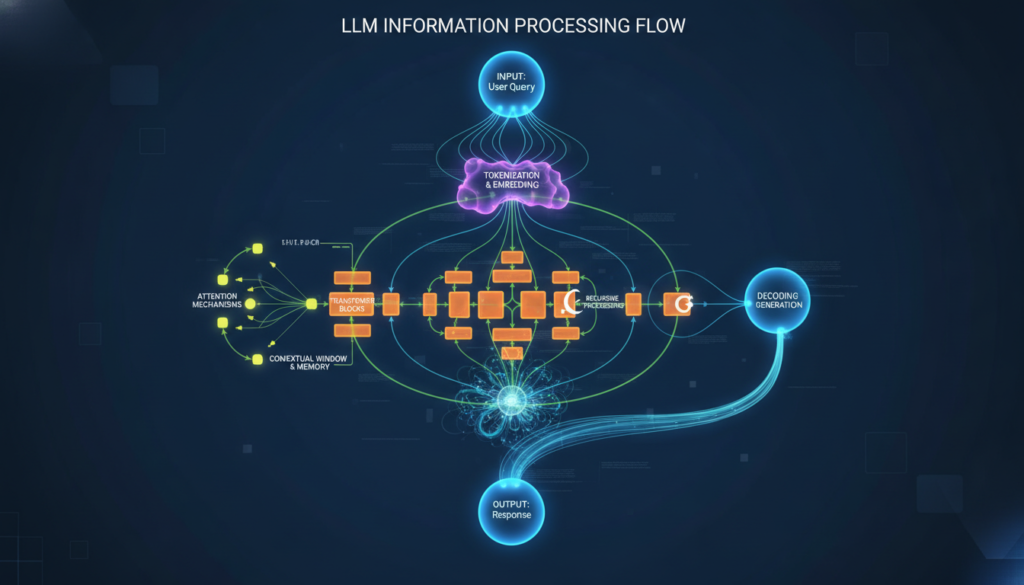

Large Language Models, however, use something called a Transformer architecture. Instead of looking at just the last word, they look at everything in their context window. Depending on the model, that can be a paragraph, a long document, or even an entire book you have provided. They use a mechanism called "attention" to decide which parts of your prompt matter most for each word they generate. If you ask an LLM to explain a complex "if-else" block in a script, it is not guessing from the previous one or two words. It is weighing how the variables and functions in that block relate to one another. It is more like a world-class chef who understands how every ingredient reacts with the others, and less like a vending machine that just gives you a soda when you press a button.
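Here is a minimal sketch, in plain Python, of the core calculation behind attention. This calculation is known as scaled dot-product attention, and the sketch handles a single query. The three 2-number vectors are invented for illustration. Real Transformers learn much longer vectors, compute queries, keys, and values with trained weight matrices, and run many attention "heads" in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Each key is compared with the query (dot product, scaled by sqrt of the
    vector length). Better matches get bigger weights, and the output is the
    weighted average of the value vectors.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Made-up 2-D vectors for three tokens (real models use hundreds or thousands of dimensions).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]  # "looking for" tokens similar to the first one

weights, output = attention(query, keys, values)
print([round(w, 3) for w in weights])
print([round(x, 3) for x in output])
```

The query matches the first and third keys equally well and the second key less well. Those two tokens therefore get larger weights, and the output leans toward their values. That is the sense in which the model "pays attention" to some parts of the input more than others.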

The Power of Context

When you use tools from companies like OpenAI or Anthropic, you are interacting with models that have billions of parameters. These parameters have "learned" patterns in human language and in programming code. The models were trained on huge amounts of code, including public code from sites like GitHub, so they can often recognize the intent behind the logic. They do not just see text; they see a roadmap of instructions. This is why they can often explain "why" a specific loop is causing a memory leak, instead of just suggesting you add a semicolon.

Turning Gibberish into English: The Logic Translator

Have you ever looked at a piece of code or a math proof and felt like you were staring at ancient hieroglyphics? We have all been there. You find a solution on the internet, but you have no idea how it works. This is where the "explain" feature of LLMs becomes your best friend. Instead of just copying and pasting, you can ask the AI to "explain this to me like I am a gamer" or to "use a sports analogy."

For example, say you are struggling with a nested loop in JavaScript. A simple auto-complete tool would just finish the brackets for you. An LLM, on the other hand, can tell you: "Think of the outer loop like a clock’s hour hand and the inner loop like the minute hand. Every time the hour hand moves one step, the minute hand has to go all the way around." Suddenly, that confusing block of code makes sense. The LLM has translated dry logic into a mental image you can actually hold onto.
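The clock analogy maps directly onto code. The paragraph mentions JavaScript, but this sketch uses Python to keep all the examples in one language, and the loop structure is the same in both. The function name `clock_ticks` is hypothetical. A real clock has 60 minutes per hour, so the sketch uses small numbers to keep the output short.

```python
def clock_ticks(hours, minutes_per_hour):
    """Simulate the hour-hand / minute-hand analogy with a nested loop."""
    ticks = []
    for hour in range(hours):                   # outer loop: the hour hand
        for minute in range(minutes_per_hour):  # inner loop: the minute hand
            ticks.append((hour, minute))
    return ticks

ticks = clock_ticks(hours=3, minutes_per_hour=4)
print(len(ticks))  # 12: the inner body runs 3 * 4 times
print(ticks[:5])   # [(0, 0), (0, 1), (0, 2), (0, 3), (1, 0)]
```

The minute hand (the inner loop) goes all the way from 0 to 3 before the hour hand (the outer loop) moves from 0 to 1.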

Real-World Example: Regex Patterns

Regular Expressions (Regex) are notoriously difficult to read. They look like a cat walked across a keyboard. A pattern like `^([a-z0-9_\.-]+)@([\da-z\.-]+)\.([a-z\.]{2,6})$`, a simplified email matcher, is enough to give anyone a headache. An LLM can break it down piece by piece. The first group matches the username, and the `@` separates it from the domain. The second group matches the domain name. The escaped dot `\.` requires a literal dot. The last group matches an extension of 2 to 6 characters, like com or org. This is logic explanation in action, and it is a massive step up from just filling in the blanks.
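You can check an explanation like this yourself with Python's built-in `re` module. This sketch uses the email pattern discussed above, written out with its backslashes. The helper name `explain_email` is hypothetical. Keep in mind that this pattern is a simplified teaching example. It only accepts lowercase letters, and it will reject some valid email addresses.

```python
import re

# The simplified email pattern, compiled once so it can be reused.
EMAIL_PATTERN = re.compile(r"^([a-z0-9_\.-]+)@([\da-z\.-]+)\.([a-z\.]{2,6})$")

def explain_email(address):
    """Split an address into (username, domain, extension), or None if it doesn't match."""
    match = EMAIL_PATTERN.match(address)
    if match is None:
        return None
    return match.groups()

print(explain_email("gamer_42@example.com"))  # ('gamer_42', 'example', 'com')
print(explain_email("student@school.co.uk"))  # ('student', 'school.co', 'uk')
print(explain_email("not-an-email"))          # None
```

The second example shows a detail worth asking an LLM about. The domain group is greedy, so it keeps "school.co" and leaves only the text after the last dot for the extension group.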

The Rubber Ducking Strategy 2.0

In programming, there is a famous concept called "Rubber Ducking." The idea is that if you explain your code out loud to a rubber duck on your desk, you will eventually find your own mistakes. It forces you to think through the logic. With LLMs, the duck can finally talk back. This is what we call interactive debugging.

When you ask an AI to explain complex logic, you can have a back-and-forth conversation. You can say, "Wait, I do not get why you said the variable should be global here." The AI will then rephrase its explanation. This feedback loop can speed up your learning a lot. You are not just getting an answer; you are getting a private tutor that never gets tired of your questions.

The Limits: Why You Still Need a Brain

As cool as LLMs are, we have to talk about the "hallucination" problem. Sometimes the AI will confidently explain a piece of logic that is completely wrong. This happens because it generates text that sounds plausible rather than checking facts. It might invent a function that does not exist, or give you a math explanation that sounds logical but leads to the wrong answer. This is why you should use AI as a collaborator, not a replacement for your own brain.

Always verify the logic. If the AI explains a piece of code, try to run that code. If it explains a physics concept, see if that matches what your textbook says. The magic happens when you combine the AI’s ability to summarize and explain with your ability to think critically and test things out. Think of the AI as a GPS. It is great at giving directions, but you are still the one driving the car. If it tells you to drive into a lake, you should probably hit the brakes.
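Here is what "try to run that code" can look like in practice. Suppose an AI told you that the hypothetical function below "returns the average of a list." A few quick `assert` checks can test that explanation. Probing an edge case, an empty list, then reveals something the explanation left out.

```python
def average(numbers):
    """Hypothetical code that an AI explained as 'returns the average of a list'."""
    return sum(numbers) / len(numbers)

# Test the explanation instead of trusting it.
assert average([2, 4, 6]) == 4
assert average([5]) == 5

# Probe an edge case the explanation did not mention.
try:
    average([])
except ZeroDivisionError:
    print("An empty list crashes: the explanation left this out.")
```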

Tips for Mastering Logical Explanations

If you want to get the best explanations out of an LLM, you need to know how to ask. Here are a few pro-tips for your prompts:

- Define the Persona: Tell the AI who it is. For example: "You are a senior software engineer who is great at explaining things to beginners."

- Set the Format: Ask for a bulleted list or a step-by-step breakdown. This prevents the AI from giving you a "wall of text" that is hard to read.

- Ask for Comparisons: Say "Explain this logic by comparing it to how a pizza delivery system works." Relatable analogies are the secret sauce of learning.

- The "Why" Prompt: Instead of asking "What does this do?", ask "Why did the author choose to use this specific logic here?" This gets you into the mindset of the creator.
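If you find yourself using these tips often, you can combine them into a reusable template. The sketch below is a hypothetical helper, `build_prompt`, and it is not part of any AI tool's API. It just assembles plain text that you could paste into any chat assistant.

```python
def build_prompt(code, persona=None, output_format=None, analogy=None, ask_why=False):
    """Assemble an explanation request from the prompt tips (hypothetical helper)."""
    parts = []
    if persona:
        parts.append(f"You are {persona}.")  # Define the Persona
    if ask_why:
        parts.append("Why did the author choose to use this specific logic here?")  # The "Why" Prompt
    else:
        parts.append("Explain what this code does.")
    if analogy:
        parts.append(f"Explain the logic by comparing it to {analogy}.")  # Ask for Comparisons
    if output_format:
        parts.append(f"Format your answer as {output_format}.")  # Set the Format
    parts.append("Code:\n" + code)
    return "\n".join(parts)

prompt = build_prompt(
    "for i in range(3):\n    for j in range(2):\n        print(i, j)",
    persona="a senior software engineer who is great at explaining things to beginners",
    output_format="a step-by-step breakdown",
    analogy="how a pizza delivery system works",
    ask_why=True,
)
print(prompt)
```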

Conclusion: The Future is Conversational

We are just at the beginning of what Large Language Models can do. As they get better at reasoning, they will become even more integrated into how we learn and create. They are moving from being tools that write for us to tools that think with us. By using AI to explain complex logic, you are not taking the easy way out. You are using a high-tech tool to broaden your understanding and tackle harder projects than ever before.

So, the next time you run into a brick wall of confusing code or a logic puzzle that makes your head spin, do not just look for an auto-complete fix. Ask for an explanation. Challenge the AI to make it simple. And most importantly, keep experimenting. The tech world moves fast, and being the person who knows how to use these tools effectively will put you miles ahead of the competition.